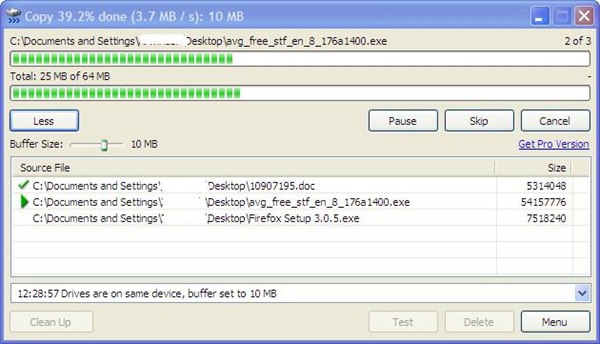

Then I can create the set of copy commands (or just get the source and destination paths) Select 'xcopy /d /y '|| T2.Dir_FullPath || '\\' || ID || '.ext ' || T3.Dir_FullPath || '\\' || ID || '.ext*' Do you have any ideas that may help me? What can I do with a file containing hundreds of source and destination paths to make a copy of all of these? Is there a faster alternative to xcopy? or a faster date check and then call any copy command?Ī more detailed explanation on how I currently get and copy files based on DB information: So, then I tried to use "xcopy" to avoid overwrite if date is the same, by using the /D switch and /Y switch to silently confirm the prompt, but in practice, a huge script with hundreds of thousands of xcopy commands takes forever and I don't see any speed-up when some or most of these files already exist with the same date. I tried at first a simple huge cmd script checking if the file, per resulting path, existed in the destination path and then trying to copy if it didn't exist, but the modified files were skipped.

How can I perform this copy in the fastest way possible? It is possible that these paths point to a file that has been previously been copied, but has been modified.

Such process identifies a set of files and constructs a proper source and destination path from several fields of different tables in DB to then copy all of these files, so I end up with potentially hundreds of thousands or millions of paths. I have a process that is run every month or so.
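To make the "faster date check and then call any copy command" idea concrete, here is a minimal Python sketch, not a tested solution for this exact setup. It assumes the query is changed to return plain source and destination paths instead of xcopy commands. The function names (`needs_copy`, `copy_if_newer`, `sync_pairs`) and the worker count are hypothetical choices for illustration. The idea is to do the date comparison inside a single process, so that no new xcopy process has to be started for every file, and to copy only when the destination is missing or older than the source.

```python
import os
import shutil
from concurrent.futures import ThreadPoolExecutor


def needs_copy(src, dst):
    """Return True if dst is missing or older than src (similar in spirit to xcopy /D)."""
    try:
        dst_stat = os.stat(dst)
    except FileNotFoundError:
        return True
    return os.stat(src).st_mtime > dst_stat.st_mtime


def copy_if_newer(pair):
    """Copy one (src, dst) pair when needed; return True if a copy happened."""
    src, dst = pair
    if not needs_copy(src, dst):
        return False
    # Create the destination folder if it does not exist yet.
    os.makedirs(os.path.dirname(dst) or ".", exist_ok=True)
    # copy2 also copies the modification time, so the next run can skip this file.
    shutil.copy2(src, dst)
    return True


def sync_pairs(pairs, workers=8):
    """Check and copy all pairs from one process; return the number of files copied."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(copy_if_newer, pairs))
```

The pairs could be read from a file exported by the query, for example one source path and one destination path per line separated by a tab (an assumed format). Whether threads help depends on the storage and network involved, so the worker count is something to measure rather than trust. On file systems with coarse timestamp resolution the comparison may need a small tolerance.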
